What Is an MCP Server?

An MCP server is what lets AI models like Claude and ChatGPT actually use your tools. What it is, how it works, MCP server vs API, what you can plug in, and where it's headed.

MCP, the Model Context Protocol, came out of Anthropic in late 2024. It fixes a problem that sounds too dumb to be real: out of the box, large language models can't reliably call APIs.

Without help (careful prompts, a pile of glue code), a model you ask to "go pull the open invoices" will happily write you a paragraph about invoices instead of fetching them.

An MCP server is the thing that fixes that. It sits between an AI model and a set of tools, and it tells the model which tools exist, when to use them, how to call them, and what comes back, in a shape the model can act on.

This is the explainer for anyone who's seen "MCP server" in a config file, a product launch, or a Slack thread and quietly thought "wait, what is that." We'll cover what an MCP server actually is, MCP server vs MCP client, how it works today, why it's wired up the way it is, MCP server vs API, what you can plug in, and where this is going.

What is an MCP server?

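An MCP server is a small program that exposes a set of tools (functions a model is allowed to call) over a standard protocol built on JSON-RPC 2.0. The spec also lets servers offer resources (data to read) and prompts (reusable templates), but tools are what most people mean.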

Think of it as a universal adapter. Once a tool implements MCP, any app that speaks MCP (Claude, ChatGPT, Cursor, and a growing list) can hand it to its model, and the responses come back in a consistent format.

If you've written software, the cleanest mental model is an API for your APIs: a thin layer whose whole job is to make a normal API legible to a language model.

Concretely, an MCP server answers four questions for the model:

- Which tools are available?

- When should I reach for each one?

- How do I call each one: with what arguments, in what shape?

- What do I get back if I do?
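To make those four answers concrete, here is roughly what a server hands back when the app asks which tools exist. This is a minimal Python sketch of a `tools/list` reply for a hypothetical invoicing tool: the field names (`name`, `description`, `inputSchema`, and `content`/`isError` on a call result) follow the MCP spec, while `list_open_invoices`, its arguments, and the sample invoice are invented for illustration.

```python
import json

# Hypothetical tool, shaped like one entry in an MCP "tools/list" result.
# "description" tells the model when to reach for it; "inputSchema" (JSON Schema)
# tells it how to call it.
LIST_OPEN_INVOICES = {
    "name": "list_open_invoices",
    "description": (
        "Fetch unpaid invoices from the billing system. "
        "Use when the user asks about open, unpaid, or overdue invoices."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string", "description": "Optional customer filter"},
            "limit": {"type": "integer", "minimum": 1, "maximum": 100},
        },
        "required": [],
    },
}


def tools_list_response(request_id: int) -> dict:
    """Build the JSON-RPC 2.0 reply to a "tools/list" request: which tools exist."""
    return {"jsonrpc": "2.0", "id": request_id, "result": {"tools": [LIST_OPEN_INVOICES]}}


# What comes back from a "tools/call": content items the model can read (made-up data).
EXAMPLE_CALL_RESULT = {
    "content": [{"type": "text", "text": json.dumps([{"id": "INV-1042", "amount_due": 1250.0}])}],
    "isError": False,
}

if __name__ == "__main__":
    print(json.dumps(tools_list_response(1), indent=2))
```

The description carries the "when", the schema carries the "how", and the call result carries the "what comes back".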

MCP server vs MCP client

Quick disambiguation, because these two get muddled.

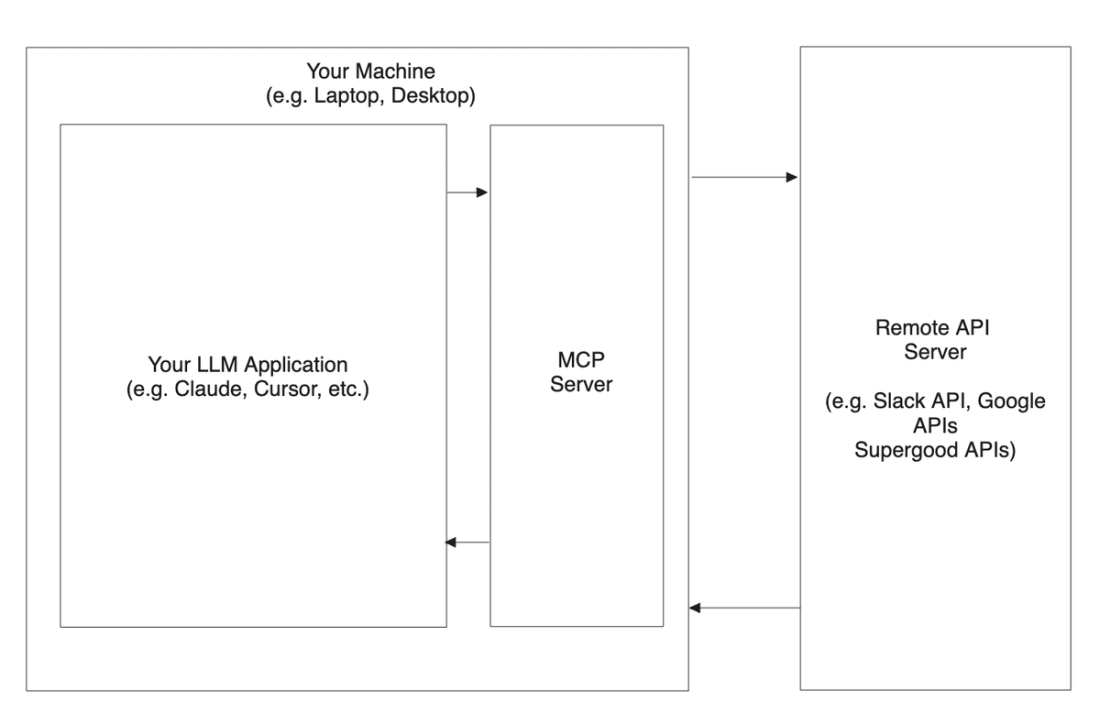

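The client is the app the model lives in: Claude Desktop, Cursor, Claude Code, ChatGPT. The server is the thing exposing tools. (Strictly, the spec calls the app the "host" and reserves "client" for the connection it opens to each server, but in everyday usage "client" means the app.)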

One client can connect to many servers at once: a GitHub MCP server, a Postgres MCP server, your company's internal MCP server, all available in the same chat. You install (or point at) servers; the client wires them into the model.
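Here is a rough sketch of what "wiring many servers into one chat" involves on the app side: collect each server's tools into one toolbox and route every call back to the server that owns it. `FakeServer` and `Host` are hypothetical stand-ins with no transport or protocol handshake, and the `server__tool` prefixing is just one assumed way to avoid name collisions; real apps choose their own scheme.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class FakeServer:
    """Stand-in for one connected MCP server; a real one sits behind a transport."""
    name: str
    tools: dict[str, Callable[..., Any]] = field(default_factory=dict)

    def list_tools(self) -> list[str]:
        return list(self.tools)

    def call_tool(self, tool: str, arguments: dict[str, Any]) -> Any:
        return self.tools[tool](**arguments)


class Host:
    """Sketch of an app merging several servers' tools into one toolbox for the model."""

    def __init__(self, servers: list[FakeServer]) -> None:
        # Map "<server>__<tool>" to (server, original tool name).
        self.routes: dict[str, tuple[FakeServer, str]] = {}
        for server in servers:
            for tool in server.list_tools():
                # Prefixing keeps two servers' same-named tools from colliding.
                self.routes[f"{server.name}__{tool}"] = (server, tool)

    def available_tools(self) -> list[str]:
        return sorted(self.routes)

    def call(self, qualified_name: str, arguments: dict[str, Any]) -> Any:
        server, tool = self.routes[qualified_name]
        return server.call_tool(tool, arguments)


github = FakeServer("github", {"search": lambda query: f"issues matching {query!r}"})
postgres = FakeServer("postgres", {"query": lambda sql: f"rows for {sql}"})
host = Host([github, postgres])
print(host.available_tools())  # ['github__search', 'postgres__query']
print(host.call("github__search", {"query": "login bug"}))  # issues matching 'login bug'
```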

How does an MCP server work today?

For most of MCP's short life, an MCP server has been a process that runs locally, on the same machine where you're using the model.

Use Claude Desktop or Cursor on your Mac, and the app typically launches the MCP server as a background process on that same machine, exchanging messages with it over stdin/stdout while the server reaches out to whatever service it connects to.
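The local channel is usually MCP's stdio transport: one JSON-RPC message per line on stdin, one reply per line on stdout. The sketch below is a deliberately tiny, stdlib-only Python version handling just `tools/list` and `tools/call` for a hypothetical `echo` tool. It skips the `initialize` handshake and the other methods a real server must support (it would answer `initialize` with "Method not found"), so treat it as an illustration of the wire format, not a usable server; in practice you'd reach for an official MCP SDK.

```python
import json
import sys
from typing import IO, Any

# A single hypothetical tool, described the way a tools/list result reports it.
TOOLS = [{
    "name": "echo",
    "description": "Return the given text unchanged. Useful for checking the connection.",
    "inputSchema": {
        "type": "object",
        "properties": {"text": {"type": "string"}},
        "required": ["text"],
    },
}]


def handle(message: dict[str, Any]) -> dict[str, Any]:
    """Answer one JSON-RPC request; only tools/list and tools/call are sketched."""
    method, req_id = message.get("method"), message.get("id")
    params = message.get("params", {})
    if method == "tools/list":
        return {"jsonrpc": "2.0", "id": req_id, "result": {"tools": TOOLS}}
    if method == "tools/call":
        if params.get("name") != "echo":
            # -32602 is JSON-RPC's "Invalid params" code.
            return {"jsonrpc": "2.0", "id": req_id,
                    "error": {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}}
        text = params.get("arguments", {}).get("text", "")
        return {"jsonrpc": "2.0", "id": req_id,
                "result": {"content": [{"type": "text", "text": text}], "isError": False}}
    # -32601 is JSON-RPC's "Method not found" code.
    return {"jsonrpc": "2.0", "id": req_id,
            "error": {"code": -32601, "message": f"Method not found: {method}"}}


def serve(stdin: IO[str], stdout: IO[str]) -> None:
    """Read one JSON-RPC message per line; write one JSON reply per line."""
    for line in stdin:
        if not line.strip():
            continue
        message = json.loads(line)
        if "id" not in message:
            continue  # notifications (no id) never get a reply
        stdout.write(json.dumps(handle(message)) + "\n")
        stdout.flush()


if __name__ == "__main__":
    serve(sys.stdin, sys.stdout)
```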

That's changing fast. Remote (hosted) MCP servers are a thing now: a server you reach over the network and authorize with OAuth, instead of one launched from a local config file. ChatGPT's "connectors" are basically the same idea.
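For a feel of what changes with a remote server, here is a stdlib Python sketch that builds (but doesn't send) a `tools/list` call over HTTP. The JSON-RPC body is the same as locally; what's new is a POST to a URL, an `Accept` header allowing either plain JSON or an event stream (as the spec's Streamable HTTP transport expects), and an OAuth bearer token. The endpoint and token are placeholders, and a real client would run the `initialize` handshake and the OAuth flow first.

```python
import json
import urllib.request


def build_tools_list_request(endpoint: str, access_token: str,
                             request_id: int = 1) -> urllib.request.Request:
    """Build (but don't send) a JSON-RPC tools/list call to a remote MCP endpoint."""
    body = json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # The server may answer with a single JSON body or a server-sent event stream.
            "Accept": "application/json, text/event-stream",
            # Token obtained through OAuth, not read from a local config file.
            "Authorization": f"Bearer {access_token}",
        },
    )


# Placeholder endpoint and token, for illustration only.
req = build_tools_list_request("https://mcp.example.com/mcp", "example-token")
```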

Expect more of the ecosystem to move that way. The local-only setup was just where it started.

Why is it wired up this way?

If you've shipped code in the last decade, "a local server whose job is to call a remote server" looks deeply weird. It is. But it falls out of a real constraint, so here's the constraint.

LLMs are extraordinary at following patterns in language: human language, programming languages, whatever. What they're not reliably good at is taking a very human question, switching mid-sentence to behave like a computer long enough to make a precise API call, then switching back to conversation.

They can kinda do it. "Kinda" doesn't cut it in production.

So the model needs outside help. In normal software, outside help means calling an external API. But a model only produces text; it doesn't have the plumbing to turn that text into an API call on its own.

To give them that, you need an API to call APIs. The MCP server is that: a small piece of infrastructure you drop in next to the model that handles the human-to-machine-and-back handoff, without re-architecting the model itself.
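Here is a rough Python sketch of the machine side of that handoff: the model emits a structured tool call as JSON text, and server-side code parses it, checks the arguments, makes the real call, and turns the result back into text. Everything here is hypothetical (`get_open_invoices`, the tiny type map standing in for real JSON Schema validation); the one spec-derived detail is that tool failures go back as a normal result flagged `isError`, so the model can read the error and try again instead of the conversation crashing.

```python
import json
from typing import Any, Callable


def get_open_invoices(limit: int) -> list[dict[str, Any]]:
    """Hypothetical backing call: the precise, machine-shaped part of the job."""
    return [{"id": f"INV-{n}", "status": "open"} for n in range(1, limit + 1)]


# tool name -> (function, {argument name: required Python type}).
# The type map is a tiny stand-in for real JSON Schema validation.
TOOLS: dict[str, tuple[Callable[..., Any], dict[str, type]]] = {
    "get_open_invoices": (get_open_invoices, {"limit": int}),
}


def run_tool_call(raw: str) -> dict[str, Any]:
    """Turn a model's JSON tool call into a real function call, then back into text."""
    try:
        call = json.loads(raw)
        fn, schema = TOOLS[call["name"]]
        args = call.get("arguments", {})
        for key, expected in schema.items():
            # Exact type check, so a JSON true isn't accepted as an int.
            if type(args.get(key)) is not expected:
                raise ValueError(f"argument {key!r} must be {expected.__name__}")
        result = fn(**args)
    except (ValueError, KeyError, TypeError, AttributeError) as exc:
        # Failures go back as a tool result flagged isError, so the model can retry.
        return {"content": [{"type": "text", "text": f"Error: {exc}"}], "isError": True}
    return {"content": [{"type": "text", "text": json.dumps(result)}], "isError": False}
```

A malformed call (say, the model writing prose instead of JSON) comes back as a readable error rather than an exception.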

MCP server vs API: when do you use each?

An MCP server and an API are different layers of the same stack.

An API is for code: the caller is a developer building an integration ahead of time. An MCP server is for models: the caller is an agent deciding, at runtime, what to do.

Most MCP servers wrap an API underneath. The server just makes that API discoverable and callable by a language model, with the descriptions and guardrails a model needs.
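As a sketch of "wrapping an API underneath", here's how an MCP-facing tool might sit on top of an existing API client. Assume `billing_api_list_invoices` is the API your code already calls (faked here with in-memory rows); the wrapper adds what a model needs: a description of when to use it, a constrained schema (an enum of statuses, a capped limit), enforcement of those limits in code, and a trimmed response without internal fields. All names, fields, and limits are hypothetical.

```python
from typing import Any


def billing_api_list_invoices(status: str, page_size: int) -> list[dict[str, Any]]:
    """Stand-in for an existing REST API client (hypothetical, in-memory data)."""
    rows = [
        {"id": "INV-1", "status": "open", "amount_cents": 125000, "internal_notes": "escalated"},
        {"id": "INV-2", "status": "paid", "amount_cents": 9900, "internal_notes": ""},
        {"id": "INV-3", "status": "open", "amount_cents": 4000, "internal_notes": ""},
    ]
    return [r for r in rows if r["status"] == status][:page_size]


ALLOWED_STATUSES = {"open", "paid", "void"}
MAX_RESULTS = 25

# What the model sees: when to use the tool, plus a constrained input schema.
TOOL_DEFINITION = {
    "name": "list_invoices",
    "description": "List invoices by status. Read-only. Use for questions like "
                   "'which invoices are still open?'",
    "inputSchema": {
        "type": "object",
        "properties": {
            "status": {"type": "string", "enum": sorted(ALLOWED_STATUSES)},
            "limit": {"type": "integer", "minimum": 1, "maximum": MAX_RESULTS},
        },
        "required": ["status"],
    },
}


def list_invoices_tool(status: str, limit: int = 10) -> list[dict[str, Any]]:
    """MCP-facing wrapper: enforce guardrails, call the API, return only what the model needs."""
    if status not in ALLOWED_STATUSES:
        raise ValueError(f"status must be one of {sorted(ALLOWED_STATUSES)}")
    limit = max(1, min(limit, MAX_RESULTS))  # clamp rather than trust the model's number
    rows = billing_api_list_invoices(status=status, page_size=limit)
    # Drop internal fields and convert cents to a plain amount.
    return [{"id": r["id"], "amount": r["amount_cents"] / 100} for r in rows]
```

The API stays exactly as it was for code; the wrapper is the model-shaped layer on top.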

Rough rule: if a human engineer is building a fixed integration, ship an API (or use the one that exists). If an AI agent needs to pick from a toolbox and act, give it an MCP server.

What can you actually plug in?

Anything with an API can become an MCP server, and plenty already have one: GitHub, Slack, Postgres, Notion, your internal services.

The more interesting case is the software that doesn't expose a usable API: the vertical and legacy systems your customers actually run their business on. Yardi, ServiceTitan, Toast, AppFolio, and hundreds more.

Most of those will never ship an official MCP server. That gap is exactly where the value is.

What's next?

The endgame is obvious: any model, calling any tool, reliably, with no friction. That's a real win for every industry that runs on software, which is all of them.

MCP isn't there yet, and it's still a little weird. But it's the best first step in that direction, and the rough edges (remote servers, OAuth, registries, better tool descriptions) are getting sanded down quickly.

Supergood was built for this future, the one where models' demand for access to products' APIs outruns the supply of APIs that actually exist.

We generate and actively maintain APIs and MCP servers for products that don't have them, using human-in-the-loop code generation paired with observability, so engineering teams don't get stuck owning the maintenance.